The Pre-College ROI Project | Issue 1 of 12

By Lindsey Kundel, Editor in Chief, InGenius Prep

Welcome to the Pre-College ROI Project

Editor’s Note

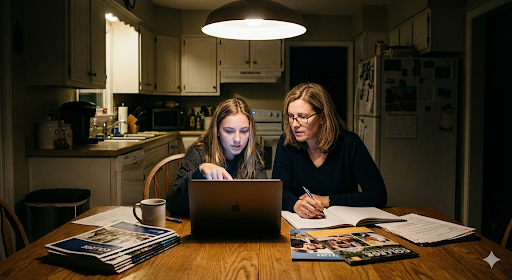

The pre-college landscape has changed rapidly over the past decade. Families today face an expanding array of summer programs, research opportunities, competitions, credentials, and “must-do” experiences—often accompanied by high costs, opaque claims, and mounting pressure to choose correctly.

The Pre-College ROI Project was created in response to that confusion.

This series is not a ranking of programs, nor is it a guide to “gaming” admissions. It does not promise outcomes or endorse specific pathways. Instead, it offers a framework for evaluating pre-college experiences more clearly—through the lens of readiness, development, and how admissions decisions are actually made.

Each installment applies the same set of criteria to a different category of opportunity, with the goal of helping families ask better questions, make proportionate choices, and protect students’ curiosity and wellbeing along the way.

We believe families deserve transparent, research-informed guidance without fear-based framing or hidden incentives. This journal is offered as a public resource, freely available to anyone navigating these decisions.

We hope it brings clarity where the process often feels overwhelming—and reassurance that thoughtful preparation matters more than polished packaging.

How to Use This Series

This series is designed to be read slowly, selectively, and over time.

Each installment examines a different category of pre-college opportunity using the same framework. You do not need to read every issue in order, nor do you need to agree with every conclusion for the series to be useful. The goal is not to replace judgment, but to sharpen it.

As you read, we recommend focusing on three questions:

- What assumptions am I bringing to this category?

Many pre-college decisions are shaped by reputation, anecdote, or urgency. This series is most useful when read with curiosity rather than confirmation in mind.

- How does this apply to my student’s context?

No experience is inherently high- or low-value in isolation. Value emerges from alignment between opportunity, access, interests, and developmental stage.

- What tradeoffs am I making?

Time, energy, and wellbeing are finite. This framework is intended to help families weigh opportunity costs more intentionally, not to encourage doing more.

Each installment includes a mix of research, pattern-based insight, and practical tools. Some sections will feel immediately relevant; others may not. That is expected. Revisit the series at different moments—when planning summers, evaluating offers, or simply recalibrating expectations.

Above all, this project is meant to reduce pressure, not add to it. There is no single “right” path. There are only better-informed ones.

Executive Summary

Over the past decade, the pre-college landscape has expanded into a vast and increasingly opaque marketplace. What was once a modest ecosystem of enrichment programs has become a global industry offering summer institutes, research opportunities, competitions, leadership academies, certifications, and credentials—many of them expensive, aggressively marketed, and difficult for families to evaluate.

As admissions at selective universities have grown more competitive, families have felt mounting pressure to “do more.” In response, spending on pre-college programs has surged. Yet higher investment has not necessarily translated into better outcomes. Instead, many families over-invest in experiences that look impressive but offer limited developmental or admissions value, while under-investing in opportunities that meaningfully build readiness, depth, and confidence.

This mismatch is not the result of poor judgment. It is the product of structural forces: prestige bias, survivorship narratives, information asymmetry, and marketing sophistication that outpaces transparency. Program branding often obscures who is actually running an experience, how selective it truly is, and what kind of evidence it provides to admissions readers.

This white paper introduces The Pre-College ROI Project, a year-long research initiative designed to bring clarity to this space. Our goal is not to rank programs or promise outcomes. Instead, we offer a consistent framework for evaluating pre-college experiences based on how admissions decisions are actually made—and how students actually develop.

We define “return on investment” in admissions terms across four dimensions:

- Growth ROI – the extent to which an experience produces real academic, technical, or creative development

- Narrative ROI – whether it strengthens coherence and depth in a student’s academic story

- Credibility ROI – whether it is interpreted by admissions readers as trustworthy evidence of readiness

- Wellbeing ROI – whether it is sustainable, motivating, and developmentally appropriate

Using this framework, we will examine major pre-college categories throughout the year, identifying where value is commonly overstated, where it is misunderstood, and where high-impact opportunities exist at a wide range of price points.

This January installment lays the foundation. It maps the modern pre-college economy, explains why families often misread value, and introduces the analytical lens we will apply across the series. It also provides practical tools families can use immediately—before committing time, money, or energy.

In a crowded and emotionally charged market, clarity is a competitive advantage. This project is designed to help families find it.

Evidence Snapshot

This analysis draws on admissions trend data from the Common Application and NACAC, behavioral economics research on decision-making under uncertainty, publicly available admissions officer commentary, and longitudinal counseling observations across thousands of applications.

Section 1 — The Rise of the Pre-College Marketplace

1.1 From Enrichment to Positioning

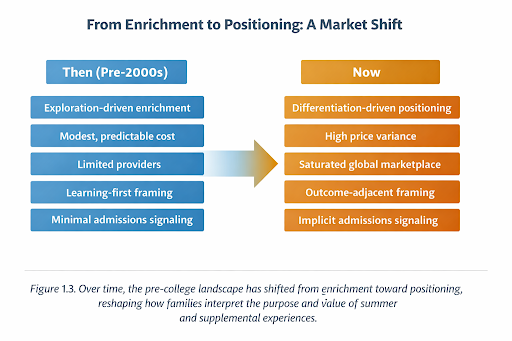

For much of the late twentieth century, pre-college programs functioned primarily as enrichment. Summer courses, camps, and academic experiences were framed as opportunities for exploration, curiosity, and personal growth. Participation was relatively limited, costs were modest, and outcomes were rarely discussed in admissions terms.

That landscape has changed.

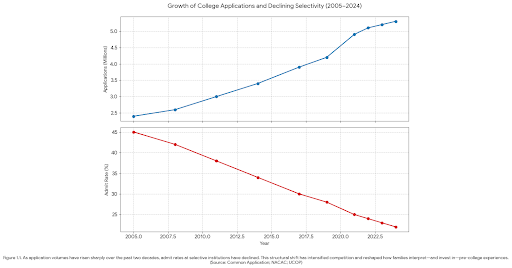

Over the past decade, application volumes at selective universities have increased dramatically, while acceptance rates have declined—particularly at highly selective institutions. According to data from the Common Application, total applications submitted through the platform have more than doubled since the early 2010s, with growth far outpacing increases in available seats at selective colleges (Common Application, 2023). Similar patterns are reflected in system-level data from large public universities, including the University of California Office of the President, where application volume growth has consistently outpaced enrollment expansion (UCOP, 2023).

Figure 1.1. The visual is composed of two charts sharing a common time axis (2005–2024):

- Top Chart: Shows the steady climb of total U.S. college applications, reaching 5.3 million in 2024.

- Bottom Chart: Illustrates the corresponding decline in admit rates at selective public institutions, dropping from 45% to 22% over the same period.

Caption: Figure 1.1. As application volumes have risen sharply over the past two decades, admit rates at selective institutions have declined. This structural shift has intensified competition and reshaped how families interpret—and invest in—pre-college experiences. (Source: Common Application; NACAC; UCOP)

As competition intensified, the purpose of pre-college experiences began to shift. What was once enrichment gradually became positioning. Families increasingly sought experiences not only for learning, but for differentiation—ways to stand out in a crowded applicant pool. Research from the National Association for College Admission Counseling has documented this shift, noting a steady rise in parental anxiety around competitiveness and a corresponding increase in strategic behavior earlier in students’ academic careers (NACAC, 2023).

This change reshaped the market. New providers entered. Existing programs expanded. Entire categories emerged around perceived admissions advantage. “Summer” became the most saturated season, combining urgency, availability, and narrative visibility on applications—particularly because summer activities are easy to list, easy to brand, and easy to market as transformational.

Today’s pre-college ecosystem includes:

- University-run programs

- Nonprofit organizations

- Private education companies

- Hybrid entities that combine elements of all three

The result is not simply more choice, but more confusion—especially in the absence of standardized definitions, disclosures, or outcomes reporting.

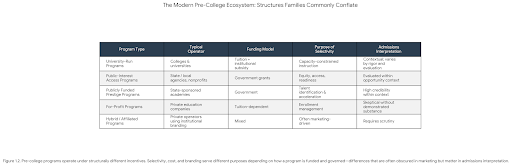

1.2 The Blurred Line Between Public, Nonprofit, and For-Profit Programs

One of the defining features of the modern pre-college marketplace is how difficult it has become for families to understand what kind of organization they are engaging with.

Many programs use naming conventions that imply public mission or academic authority—“institutes,” “centers,” “foundations,” or “academies”—regardless of their underlying structure. Others describe themselves as “university-hosted” or “university-affiliated” without being designed, taught, or evaluated by university faculty. Investigations into the growth of auxiliary education programs have noted that branding language frequently outpaces formal oversight or instructional control (U.S. Department of Education, 2022).

At the same time, tuition-funded programs have proliferated rapidly, often adopting selective language, competitive framing, and tiered admissions processes. In some cases, nonprofit status or public-facing missions coexist with revenue-driven growth models that depend on enrollment volume, upselling, or brand expansion. Research on the “shadow education” market—particularly in the U.S. and East Asia—has documented how tuition-based enrichment increasingly mirrors admissions pressure rather than educational need (Bray, 2017).

The issue is not that programs charge money. The issue is that families are often unable to tell what kind of organization they are engaging with—and what incentives shape its promises.

This lack of transparency makes it difficult to assess value. When branding obscures structure, families are left to rely on proxies—logos, location, name recognition, or anecdotal outcomes—rather than on substance. NACAC has repeatedly flagged this information asymmetry as a growing challenge for families navigating pre-college options without institutional guidance (NACAC, 2023).

Figure 1.2. The visual compares pre-college program types, highlighting how their funding models and operators shape the purpose of their selectivity and how admissions officers ultimately interpret them.

Caption: Figure 1.2. Pre-college programs operate under structurally different incentives. Selectivity, cost, and branding serve different purposes depending on how a program is funded and governed—differences that are often obscured in marketing but matter in admissions interpretation.

1.3 Three Structurally Different Models Families Commonly Conflate

A critical source of misunderstanding in the pre-college space is the tendency to treat all selective programs as equivalent. In reality, selectivity can serve very different purposes depending on how a program is designed, funded, and governed.

Figure 1.3. Over time, the pre-college landscape has shifted from enrichment toward positioning, reshaping how families interpret the purpose and value of summer and supplemental experiences.

Model A: Public-Interest Access Programs

These programs are designed primarily to expand access and build readiness for students who might otherwise lack opportunity. Federal, state, and municipal education initiatives have long used summer programming as a tool for academic preparation and confidence-building, particularly for students from under-resourced schools (OECD, 2021).

They typically feature:

- Clear eligibility criteria (based on income, geography, school context, or other factors)

- Transparent public missions

- Modest or no cost to families

- Accountability to public funders or agencies

Their value lies in preparation, confidence-building, and skill development—not in comparative prestige. Admissions officers evaluate these programs within the context of opportunity rather than as competitive differentiators, consistent with NACAC’s guidance on contextual review.

Model B: Publicly Funded Prestige Programs

Some government-sponsored programs are intentionally designed as elite centers of excellence. Their purpose is not simply access, but the identification and development of top academic talent. These programs often function as state- or region-level flagships, concentrating advanced instruction that may exceed what students’ home schools can offer.

These programs are characterized by:

- Highly competitive admissions

- Intensive curricula that exceed typical secondary offerings

- Longstanding reputations and documented alumni outcomes

- Clear public accountability paired with academic rigor

Admissions officers recognize these programs as credible indicators of readiness, particularly when they replace or substantially exceed local curricular opportunities. Within context, they function as legitimate prestige vehicles—distinct from both access programs and tuition-driven selective offerings.

Model C: For-Profit or Hybrid Prestige Programs

A third category consists of tuition-dependent programs—some for-profit, some hybrid—that position themselves as selective and high-status. These programs vary widely in quality and intent.

Some offer meaningful instruction and mentorship. Others rely heavily on branding, selective language, and perceived affiliation. Research on private supplemental education markets has shown that selectivity language in these contexts is often decoupled from instructional rigor or evaluative depth (Bray, 2017).

Their admissions value depends not on how competitive they sound, but on how they are structured, evaluated, and contextualized.

Not all selective programs are alike. Some are designed to concentrate excellence; others are designed to scale enrollment. Their admissions value is not interchangeable.

Understanding this distinction is foundational to evaluating ROI—and to avoiding costly missteps.

Section 1 — References (APA Style)

Bray, M. (2017). Shadow education: Private supplementary tutoring and its implications for policy makers. UNESCO International Institute for Educational Planning. https://unesdoc.unesco.org/

Common Application. (2023). Application trends and volume reports. https://www.commonapp.org

National Association for College Admission Counseling. (2023). State of College Admission. https://www.nacacnet.org

OECD. (2021). Education at a Glance. https://www.oecd.org/education/

University of California Office of the President. (2023). Undergraduate admissions data. https://www.ucop.edu

U.S. Department of Education. (2022). Auxiliary and enrichment education programs: Oversight and transparency considerations. https://www.ed.gov

Section 2 — Why Families Misread Value

2.1 Prestige Bias in a High-Uncertainty Environment

When information is incomplete and stakes feel high, people rely on shortcuts. In behavioral economics, these shortcuts are known as heuristics—mental rules of thumb that simplify decision-making under uncertainty. In the pre-college context, prestige functions as one of the most powerful heuristics available.

Families are often asked to evaluate dozens, sometimes hundreds, of programs with limited transparency about instructional rigor, selectivity, or outcomes. Under these conditions, recognizable names, elite associations, and institutional branding provide cognitive relief. Research by Amos Tversky and Daniel Kahneman demonstrates that when individuals face complex choices with asymmetric information, they disproportionately rely on surface cues that feel trustworthy, even when those cues are weak predictors of underlying quality (Tversky & Kahneman, 1974).

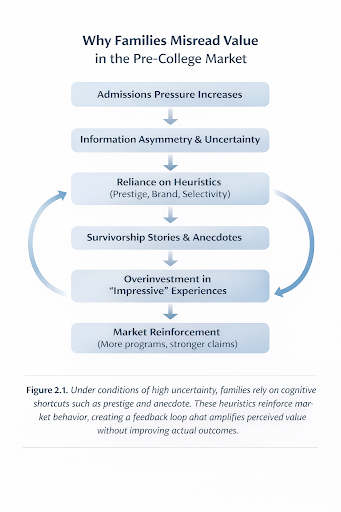

Figure 2.1. Under conditions of high uncertainty, families rely on cognitive shortcuts such as prestige and anecdote. These heuristics reinforce market behavior, creating a feedback loop that amplifies perceived value without improving actual outcomes.

In the pre-college marketplace, university names, geographic prestige, and academic language often substitute for substantive evaluation. This logic is understandable. It is also frequently misleading.

Prestige in branding does not reliably correlate with rigor in design, depth of instruction, or credibility in admissions review. Yet when families operate under uncertainty, brand recognition becomes a stand-in for analysis.

2.2 Survivorship Stories and the Myth of Replication

Another powerful distortion comes from survivorship bias—the tendency to focus on visible success stories while ignoring the far larger number of unseen counterexamples.

Families regularly hear anecdotes such as:

- “My neighbor did this program and got into Yale.”

- “Everyone from that institute ends up at top schools.”

- “This research program places students every year.”

Behavioral research shows that people systematically overweight these narratives because failures are less visible and less discussed. As Kahneman explains in Thinking, Fast and Slow, human judgment is especially vulnerable to vivid, emotionally resonant examples—even when they are statistically unrepresentative (Kahneman, 2011).

In admissions, this bias is particularly dangerous. Outcomes are multi-causal and context-dependent. Reducing them to a single experience creates a false sense of replicability. The belief that a program can be “copied” to reproduce a result leads families to over-invest in experiences that appear correlated with success but are not causally responsible for it.

This distortion is amplified in high-cost categories, where even one compelling anecdote can justify disproportionate financial and emotional investment.

2.3 The Allure of Selectivity Language

Selectivity is one of the most misunderstood concepts in the pre-college marketplace.

In undergraduate admissions, selectivity typically reflects constrained capacity combined with meaningful evaluation. In the summer and enrichment space, however, selectivity language is often unregulated and inconsistently defined. Terms such as “competitive,” “selective,” or “by application” can refer to anything from rigorous academic screening to basic enrollment management.

Research on consumer interpretation of selective labeling shows that people routinely conflate scarcity with quality—even in the absence of evidence about evaluation criteria (Thaler & Sunstein, 2008). In education markets, this leads families to assume:

- Lower acceptance rates equal higher value

- Competitive admissions imply instructional rigor

- Selectivity automatically translates into admissions advantage

None of these assumptions are reliably true.

Without transparency about how applicants are assessed—and for what purpose—selectivity functions as a marketing feature rather than a meaningful indicator of educational or admissions value.

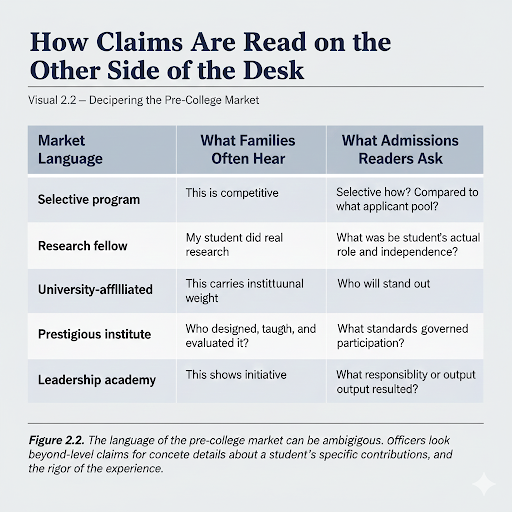

Figure 2.2. Pre-college marketing language often emphasizes prestige and selectivity. Admissions readers, by contrast, focus on role clarity, evaluation standards, and demonstrable growth.

2.4 Marketing Sophistication Outpacing Transparency

The modern pre-college market is highly professionalized. Program websites are polished, emotionally resonant, and optimized to speak directly to parental anxiety. Messaging frequently emphasizes urgency, scarcity, and future outcomes—sometimes subtly, sometimes explicitly.

Higher education researchers have documented how education-adjacent markets increasingly adopt commercial marketing strategies that prioritize conversion over clarity, particularly in high-anxiety decision environments (Bray, 2017). Common tactics include:

- Countdown deadlines and “limited seats”

- Tiered programs framed as merit-based progression

- Testimonials without context or comparison groups

- Faculty listings that obscure actual instructional involvement

- Scholarship language that implies selectivity while requiring payment

In many cases, marketing copy is more sophisticated than the underlying educational design. Families are asked to make high-stakes decisions based on aspirational language rather than explanatory detail.

This imbalance is not accidental. It reflects a market in which perception often drives enrollment more effectively than transparency.

2.5 Why Cost Distorts Perceived Value

Cost is another proxy families often use—sometimes unconsciously—to infer quality.

Behavioral economics research consistently shows that people associate higher prices with higher value, even when objective differences are minimal or nonexistent. This phenomenon, sometimes referred to as the price–quality heuristic, is well documented in consumer behavior research (Thaler & Sunstein, 2008).

In the pre-college context, high prices can create an illusion of rigor, exclusivity, or seriousness. When an experience requires significant financial investment, it feels more consequential—leading families to assume it must offer commensurate admissions value.

In reality, cost is a poor predictor of ROI. Some of the most developmentally meaningful experiences available to students are low-cost or free, particularly when supported by schools or public institutions. Conversely, some of the most expensive programs offer limited depth, minimal evaluation, and weak credibility in admissions review.

The danger is not overspending alone, but misallocating resources—financial, emotional, and temporal—based on flawed assumptions.

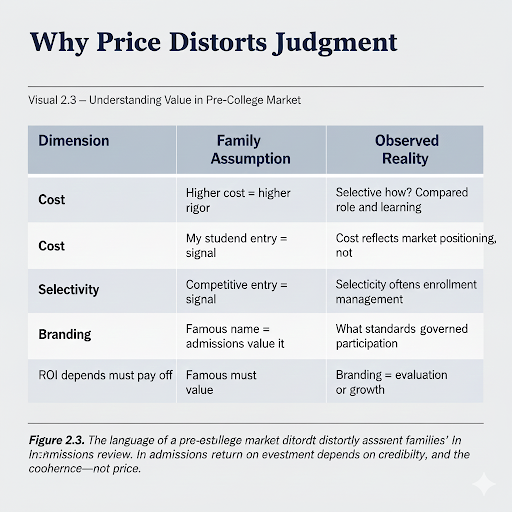

Figure 2.3. Cost and perceived prestige frequently distort families’ assessment of value. In admissions review, return on investment depends on development, credibility, and coherence—not price.

2.6 The Missing Lens: How Admissions Actually Reads These Experiences

Underlying all of these distortions is a fundamental gap between how families evaluate pre-college experiences and how admissions officers interpret them.

Families often ask:

- “Is this impressive?”

- “Will this stand out?”

- “Does this look competitive?”

Admissions readers ask different questions:

- “What did the student actually do?”

- “What changed as a result?”

- “Do I trust this evidence?”

- “How does this fit within the student’s context and trajectory?”

Research and professional guidance from admissions organizations consistently emphasize contextual evaluation, coherence, and credibility over sheer volume or prestige (NACAC, 2023). When families optimize for appearance rather than interpretation, they risk pursuing experiences that add noise rather than clarity to an application.

This gap—between perceived value and assessed value—is where misinvestment occurs.

The problem, then, is not a lack of opportunity. It is a lack of shared criteria.

To evaluate pre-college experiences responsibly, families need a framework that separates development from decoration, credibility from branding, and preparation from panic. The next section introduces that framework.

Section 2 — References (APA Style)

Bray, M. (2017). Shadow education: Private supplementary tutoring and its implications for policy makers. UNESCO International Institute for Educational Planning. https://unesdoc.unesco.org/

Kahneman, D. (2011). Thinking, fast and slow. Farrar, Straus and Giroux.

National Association for College Admission Counseling. (2023). State of College Admission. https://www.nacacnet.org

Thaler, R. H., & Sunstein, C. R. (2008). Nudge: Improving decisions about health, wealth, and happiness. Yale University Press.

Tversky, A., & Kahneman, D. (1974). Judgment under uncertainty: Heuristics and biases. Science, 185(4157), 1124–1131. https://doi.org/10.1126/science.185.4157.1124

Section 3 — Defining ROI in Admissions Terms

Before families can evaluate the return on pre-college investments, the term “ROI” itself needs to be clarified.

In financial contexts, return on investment implies a measurable relationship between inputs and outcomes. In college admissions, no such linear relationship exists. There is no ethical or defensible way to claim that participation in a specific program causes admission to a particular university.

Yet families are routinely encouraged—implicitly or explicitly—to think in those terms.

This project rejects that framing.

ROI in admissions is not about guarantees. It is about readiness: how experiences prepare a student to succeed academically, contribute meaningfully, and present a coherent, credible application. This definition aligns closely with how selective institutions describe holistic review in their own admissions guidance (NACAC, 2023; Harvard College Admissions, 2022).

3.1 What ROI Is Not

Before defining what matters, it is important to name what does not.

Admissions ROI is not:

- A promise of admission to any specific institution

- A ranking of programs by prestige or brand recognition

- A formula that can be replicated across students

- A substitute for coursework, grades, or long-term engagement

Figure 3.1. Return on investment in admissions is not about guarantees. It reflects how experiences contribute to growth, coherence, credibility, and long-term readiness.

National admissions organizations have repeatedly emphasized that extracurricular experiences are evaluated as contextual evidence, not as transactional credentials (NACAC, 2023). Selective institutions echo this stance, noting that no single experience, no matter how prestigious, can compensate for weak academic preparation or lack of sustained engagement.

Experiences function as evidence. They do not function as levers.

3.2 The Four-Pillar Admissions ROI Framework

To evaluate readiness responsibly, this project introduces a four-pillar framework that reflects how admissions decisions are actually made. No single pillar determines value on its own. High ROI emerges when multiple pillars reinforce one another.

Pillar 1: Growth ROI

Definition

Growth ROI measures the extent to which an experience produces meaningful academic, technical, or creative development beyond a student’s existing baseline.

This includes:

- Skill acquisition that can be demonstrated over time

- Increased intellectual complexity or independence

- Transferable competencies relevant to future coursework

Growth ROI is not about exposure. It is about progression.

Educational research consistently shows that depth of engagement and increasing challenge—not novelty—are what drive durable learning gains (OECD, 2021). Admissions officers look for evidence that a student did not simply attend an experience, but became more capable as a result.

Admissions reader question:

Did this student actually grow?

Pillar 2: Narrative ROI

Definition

Narrative ROI reflects the degree to which an experience clarifies, deepens, or advances a student’s academic throughline.

Selective admissions processes place significant weight on coherence—how a student’s interests, coursework, and activities fit together over time. This is not about specialization for its own sake, but about intelligibility. As admissions officers have noted publicly, strong applications tell a clear story about curiosity and direction rather than presenting disconnected accomplishments (Harvard College Admissions, 2022).

What strengthens narrative ROI

- Alignment with prior coursework or interests

- Logical progression rather than abrupt pivots

- Experiences that enable more specific, grounded essays and recommendations

What weakens it

- Prestige-driven detours

- Isolated “standout” experiences with no context

- Activities that contradict the student’s broader profile

Admissions reader question:

Does this make the application easier to understand—or harder?

Pillar 3: Credibility ROI

Definition

Credibility ROI evaluates whether an experience is interpreted by admissions readers as trustworthy, meaningful evidence of readiness beyond grades and test scores.

Credibility is not about how impressive an experience sounds. It is about whether an admissions officer can confidently believe what it represents.

Professional guidance on holistic review emphasizes the importance of verifiable context—clear standards, authentic evaluation, and adult observation—when interpreting extracurricular experiences (NACAC, 2023).

What strengthens credibility

- Selection processes involving real evaluation

- Transparent expectations and oversight

- Work products that can be contextualized or verified

- Recommendations from adults who directly observed growth

What weakens credibility

- Pay-to-play participation framed as selectivity

- Inflated titles or unverifiable claims

- Experiences designed primarily for résumé optics

Admissions reader question:

Do I trust this—and does it tell me something real about the student’s readiness?

Editorial note: Throughout this series, we use credibility rather than signal. Admissions officers are not decoding signals; they are assessing whether evidence is trustworthy.

Pillar 4: Wellbeing ROI

Definition

Wellbeing ROI measures whether an experience supports sustained motivation, mental health, and long-term engagement, rather than short-term optimization.

Research in adolescent development consistently shows that chronic overextension undermines learning, motivation, and performance over time (Damour, 2019). Experiences that appear impressive but accelerate burnout may weaken readiness, even if they look strong in isolation.

What supports wellbeing ROI

- Developmentally appropriate workload

- Student agency and intrinsic motivation

- Energy left for coursework, relationships, and rest

What undermines it

- Chronic stress or sleep deprivation

- Adult-driven participation

- Experiences pursued primarily out of fear

Admissions reader question:

Is this a student who can thrive here—or one who is already burning out?

3.3 Why These Pillars Must Be Evaluated Together

Each pillar captures a different dimension of readiness. None is sufficient on its own.

- High Growth ROI with low Credibility ROI may indicate real learning that is difficult for admissions readers to interpret.

- High Credibility ROI with low Growth ROI may reflect polished credentials with limited substance.

- Strong Narrative ROI without Wellbeing ROI may signal unsustainable over-optimization.

The strongest experiences:

- Build real skills

- Fit coherently into a student’s story

- Are trusted by admissions readers

- Are sustainable over time

This multi-pillar approach aligns with how holistic review is practiced in real admissions settings—where context, coherence, and credibility matter more than any single credential.

Figure 3.2. High-ROI experiences combine real growth with credible evaluation. Experiences that score highly on only one dimension often underperform in admissions review.

3.4 Visual Framework: Growth × Credibility

Throughout this series, we will frequently reference a simple conceptual matrix:

- High Growth / High Credibility — rare, high-ROI experiences

- High Growth / Low Credibility — valuable development with limited interpretive clarity

- Low Growth / High Credibility — credentials that look strong but add little substance

- Low Growth / Low Credibility — experiences best avoided

Figure 3.3. Return on investment emerges from alignment across pillars. Strength in one dimension cannot fully compensate for weakness in others.

This matrix is not a ranking system. It is a diagnostic tool—designed to help families evaluate proportion, not prestige.
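For readers who prefer to see the matrix as a lookup, the four cells can be sketched in a few lines of Python. This is only an illustration: the names `Quadrant`, `MATRIX`, and `place_in_matrix` are hypothetical, and reducing Growth and Credibility to simple high/low judgments is an assumption made for the sketch, not a scoring method used by this series.

```python
from typing import NamedTuple


class Quadrant(NamedTuple):
    label: str    # which cell of the Growth x Credibility matrix
    reading: str  # how that cell is described in Section 3.4


# Keys are (high_growth, high_credibility). The high/low split is a
# simplifying assumption; real experiences sit on a spectrum.
MATRIX = {
    (True, True): Quadrant(
        "High Growth / High Credibility",
        "rare, high-ROI experience"),
    (True, False): Quadrant(
        "High Growth / Low Credibility",
        "valuable development with limited interpretive clarity"),
    (False, True): Quadrant(
        "Low Growth / High Credibility",
        "credential that looks strong but adds little substance"),
    (False, False): Quadrant(
        "Low Growth / Low Credibility",
        "experience best avoided"),
}


def place_in_matrix(high_growth: bool, high_credibility: bool) -> Quadrant:
    """Return the matrix cell for a pair of high/low judgments."""
    return MATRIX[(high_growth, high_credibility)]


if __name__ == "__main__":
    cell = place_in_matrix(high_growth=True, high_credibility=False)
    print(f"{cell.label}: {cell.reading}")
```

The lookup returns a description rather than a score, in keeping with the matrix's role as a diagnostic tool rather than a ranking.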

With a shared definition of ROI in place, we can now begin applying this framework to the categories families encounter most often. The next section offers an early snapshot of high-ROI and low-ROI patterns—not as final judgments, but as a starting point for deeper analysis throughout the year.

Section 3 — References (APA Style)

Harvard College Admissions. (2022). How we read applications. https://college.harvard.edu/admissions/apply/what-we-look-for

National Association for College Admission Counseling. (2023). State of College Admission. https://www.nacacnet.org

OECD. (2021). Education at a Glance. https://www.oecd.org/education/

Damour, L. (2019). Under pressure: Confronting the epidemic of stress and anxiety in girls. Ballantine Books.

Section 4 — High-ROI vs. Low-ROI Categories

With a shared framework in place, families often ask the same question: Which kinds of experiences tend to be worth the investment—and which ones are not?

This section offers an early, provisional snapshot. It is not a ranking, and it is not a set of prescriptions. Context matters enormously. A high-ROI experience for one student may be low-ROI for another depending on prior preparation, access, interests, and goals.

The purpose here is to surface recurring patterns, not to issue verdicts.

Figure 4.1. Pre-college categories vary widely across dimensions of return on investment. This snapshot reflects common patterns, not universal outcomes. Individual programs may perform above or below category averages depending on structure, rigor, and student context.

4.1 How to Read This Snapshot

Each category below is evaluated broadly across the four ROI pillars introduced in Section 3. Individual programs may perform better or worse than the category average, and many sit somewhere in between.

Two cautions apply throughout:

Eligibility is not advantage.

Programs restricted by geography, income, race, school context, or other structural factors are evaluated within the context of opportunity, not compared across populations. Admissions guidance consistently emphasizes contextual review rather than cross-context competition (National Association for College Admission Counseling, 2023).

Prestige is not portable.

Selectivity only carries value when it reflects real evaluation and credible standards. Branding alone does not transfer admissions value across contexts or institutions.

Later installments in this series will examine many of these categories in depth, using data, case studies, and category-specific risks.

4.2 Categories That Often Deliver Higher ROI

(When aligned with the student’s profile)

Publicly Funded Prestige Programs

When designed as centers of excellence, publicly funded programs often score highly across Growth, Credibility, and Narrative ROI. These programs typically feature constrained capacity, intensive instruction, and transparent accountability—factors admissions officers recognize as credible indicators of readiness.

Research on selective public education initiatives suggests that their value lies less in comparison to peers and more in the academic stretch they provide relative to a student’s baseline (OECD, 2021). When such programs substitute for or exceed what a student’s home school can offer, their admissions value is legible within context.

Tier-1 University Summer Programs

Institutionally run programs with real academic expectations and limited enrollment can provide meaningful Growth ROI, particularly when coursework mirrors collegiate rigor. However, their Credibility ROI depends on instructional depth and evaluation—not merely on location or university branding.

Studies of pre-college university programs show wide variation in instructional intensity and assessment practices, reinforcing the need to distinguish between exposure-based models and academically evaluative ones (U.S. Department of Education, 2022).

Serious Competitions

Competitions with transparent criteria, external evaluation, and meaningful selectivity can offer strong Credibility and Narrative ROI—especially when engagement deepens over time.

Educational research on talent development consistently finds that competitions are most informative when they reflect progression, feedback, and increasing challenge rather than single-instance participation (OECD, 2021). The value lies in what the competition reveals, not simply that it occurred.

Independent Projects (Well-Supported)

Student-initiated projects with clear scope, sustained effort, and authentic output often deliver high Growth and Narrative ROI. Their credibility depends on documentation, mentorship, and evidence of increasing independence.

Admissions guidance has repeatedly emphasized that original work—when contextualized and supported by adult observation—can be among the clearest indicators of readiness and intellectual ownership (NACAC, 2023).

Arts Intensives (Aligned and Sustained)

For students with genuine artistic commitment, rigorous training environments can offer strong Growth and Wellbeing ROI, with Narrative ROI emerging over time. Research on arts education highlights the importance of sustained practice and feedback in producing meaningful development (OECD, 2021).

Short-term exposure programs, by contrast, tend to offer limited returns unless embedded in a longer trajectory.

Figure 4.2. Return on investment is driven less by category labels than by underlying design features such as evaluation, duration, and student agency.

4.3 Categories That Often Produce Mixed ROI

(Highly context-dependent)

Research Mentorships

Research experiences vary dramatically in structure and substance. Well-designed mentorships can produce substantial Growth ROI, but Credibility ROI depends on the student’s actual role, independence, and outputs.

Analyses of pre-college research programs show that admissions value hinges on whether students engage meaningfully in inquiry—or merely observe it (U.S. Department of Education, 2022).

Service and Leadership Programs

Programs focused on leadership or service can support growth and wellbeing when they are embedded in authentic community engagement and sustained responsibility. Short-term or performative models often underdeliver.

Research on youth leadership development consistently emphasizes depth, continuity, and local engagement over episodic experiences framed primarily for résumé value (OECD, 2021).

Language Immersion Programs

When immersive and sustained, language programs can produce real skill growth. However, short or tourism-adjacent models tend to offer limited Growth ROI and modest admissions relevance unless paired with continued study.

Internships

Internships vary widely based on responsibility, mentorship, and output. Title alone is not predictive of value. Admissions officers evaluate internships based on scope, independence, and evidence of contribution rather than affiliation alone (NACAC, 2023).

4.4 Categories That Frequently Deliver Lower ROI

(Despite high cost or strong marketing)

Pay-to-Publish Journals

Publications without peer review or editorial standards often weaken Credibility ROI and may raise questions in admissions review. Research on academic publishing norms makes clear that pay-to-publish models without evaluation do not function as credible indicators of scholarly readiness (OECD, 2021).

Conferences and “Leadership Academies”

Experiences centered on attendance rather than production or evaluation tend to offer limited Growth or Narrative ROI, regardless of cost or branding.

Tiered Upsell Programs

Programs designed to move students through multiple paid levels frequently prioritize retention over development. Studies of private supplemental education markets document how tiered progression often reflects revenue models rather than educational necessity (Bray, 2017).

MOOCs Used as Standalone Credentials

Online courses can support learning, but certificates alone rarely add admissions value without integration into a broader trajectory. Admissions guidance emphasizes application and synthesis over isolated credentialing (NACAC, 2023).

4.5 A Necessary Reminder

Low ROI does not mean “bad.” It means misaligned expectations.

The most common mistake families make is not choosing weak programs—it is expecting the wrong outcomes from them.

Figure 4.3. Misinterpreting category signals often leads families to over-invest in experiences that add little value while overlooking lower-cost, higher-impact alternatives.

If Section 4 describes patterns of value, Section 5 addresses patterns of risk. Some programs underdeliver unintentionally. Others are structured in ways that warrant closer scrutiny.

Section 4 — References (APA Style)

Bray, M. (2017). Shadow education: Private supplementary tutoring and its implications for policy makers. UNESCO International Institute for Educational Planning. https://unesdoc.unesco.org/

National Association for College Admission Counseling. (2023). State of College Admission. https://www.nacacnet.org

OECD. (2021). Education at a Glance. https://www.oecd.org/education/

U.S. Department of Education. (2022). Auxiliary and enrichment education programs: Oversight and transparency considerations. https://www.ed.gov

Section 5 — Red Flags and Predatory Patterns

The pre-college marketplace is not uniformly exploitative. Many programs are thoughtfully designed and genuinely valuable. However, as the market has expanded rapidly—and with limited external regulation—certain structural patterns recur across programs that consistently underdeliver or mislead.

This section is not about naming or shaming. It is about helping families recognize risk signals before committing time, money, or emotional energy.

5.1 Common Red Flags to Watch For

Guaranteed or Implied Outcomes

Any language suggesting that participation will “lead to,” “position,” or “result in” admission at specific universities should be treated with skepticism.

Admissions outcomes are inherently multi-causal. National admissions organizations, including the National Association for College Admission Counseling, have repeatedly emphasized that no single experience can guarantee or predict admission outcomes (NACAC, 2023). Programs that imply otherwise—explicitly or through suggestive testimonials—are misrepresenting how admissions decisions work.

From a consumer-protection standpoint, implied guarantees are a well-documented risk marker in high-anxiety education markets. Regulatory guidance from the Federal Trade Commission warns that outcome-based marketing claims, particularly those relying on anecdote rather than aggregate data, frequently mislead consumers (FTC, 2022).

Selectivity Without Transparency

Selectivity is meaningful only when it reflects real evaluation.

Programs that emphasize competitiveness but do not disclose how applicants are assessed—or what proportion are admitted—offer little basis for evaluating educational or admissions value. Research on private supplemental education markets shows that selective language is often used to manage demand rather than to signal rigor (Bray, 2017).

Without transparency, selectivity becomes a branding device rather than a substantive feature.

Families should be able to answer:

- What criteria are used in admissions decisions?

- Who evaluates applications?

- What distinguishes accepted from rejected applicants?

If these questions cannot be answered clearly, selectivity claims should be discounted.

Nonprofit Branding Without Public Accountability

Nonprofit status does not guarantee rigor, oversight, or educational quality.

While many nonprofit programs deliver meaningful value, research and regulatory reviews have documented cases in which nonprofit branding masks tuition-dependent business models with limited accountability or transparency (U.S. Department of Education, 2022). Families may assume nonprofit status implies public mission or academic governance when, in practice, program operations resemble those of private vendors.

Indicators of genuine accountability include:

- Public reporting on mission and outcomes

- Independent oversight or affiliation

- Clear articulation of educational purpose beyond enrollment growth

Absent these features, nonprofit designation alone should not be treated as a proxy for value.

Inflated Titles and Ambiguous Roles

Titles such as “research fellow,” “associate,” “executive,” or “director” are increasingly common in pre-college programs. Without clear definition, these labels can obscure rather than clarify a student’s actual responsibilities.

Admissions guidance emphasizes that readers look past titles to understand scope, independence, and contribution (NACAC, 2023). When titles are inflated or loosely applied, they weaken Credibility ROI by making experiences harder—not easier—to interpret.

Families should ask:

- What did the student actually do?

- How much independence did they have?

- Who evaluated their work?

If titles substitute for substance, credibility suffers.

Faculty Listings That Obscure Involvement

Many programs list impressive faculty affiliations without clarifying instructional roles. Advisory boards, guest speakers, or nominal oversight are not equivalent to direct teaching or mentorship.

Analyses of university-affiliated enrichment programs have noted that faculty names are frequently used for credibility signaling even when involvement is limited or indirect (U.S. Department of Education, 2022).

Transparency matters. Families should be able to determine:

- Who teaches day-to-day?

- Who evaluates student work?

- How accessible are mentors in practice?

Tiered Upsells Framed as Merit

Programs that move students through multiple paid levels—often framed as “advancement,” “invitation,” or “honors” tiers—should be evaluated carefully.

Research on shadow education markets shows that tiered progression frequently reflects revenue optimization rather than educational necessity (Bray, 2017). While some tiered models support genuine development, others primarily serve to extend enrollment duration.

Families should distinguish between:

- Skill-based progression tied to evaluation

- Enrollment-based progression tied to payment

Only the former reliably supports ROI.

Publications Without Review or Standards

Pay-to-publish journals or conferences that lack peer review, editorial standards, or rejection rates often undermine rather than enhance credibility.

In academic contexts, publication value derives from evaluation—not visibility. Educational research on scholarly readiness emphasizes process, feedback, and revision over mere appearance of publication (OECD, 2021).

Admissions readers are familiar with the distinction. Publications without standards may raise questions about judgment and guidance rather than signaling readiness.

5.2 A Simple Rule of Thumb

If a program relies more on how it sounds than on what students actually do, families should pause.

Checklist:

If you encounter three or more of the following—opaque selectivity, outcome-based marketing, inflated titles, unverifiable faculty involvement, or upsell-driven progression—it is worth reconsidering.
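The rule of thumb above amounts to a simple count, which can be sketched as follows. This is a minimal illustration, not a screening tool: the names `RED_FLAGS`, `THRESHOLD`, and `worth_reconsidering` are hypothetical, and deciding whether a given flag is actually present remains a judgment call that no code can make.

```python
# The five checklist items named in the rule of thumb.
RED_FLAGS = (
    "opaque selectivity",
    "outcome-based marketing",
    "inflated titles",
    "unverifiable faculty involvement",
    "upsell-driven progression",
)

# "Three or more" is the cutoff stated in the checklist.
THRESHOLD = 3


def count_red_flags(observed):
    """Count distinct checklist items a family has observed in a program."""
    observed_set = set(observed)
    unknown = observed_set - set(RED_FLAGS)
    if unknown:
        raise ValueError(f"Not on the checklist: {sorted(unknown)}")
    return len(observed_set)


def worth_reconsidering(observed) -> bool:
    """Apply the rule of thumb: three or more flags means pause."""
    return count_red_flags(observed) >= THRESHOLD


if __name__ == "__main__":
    notes = ["opaque selectivity", "inflated titles", "upsell-driven progression"]
    print(worth_reconsidering(notes))
```

Duplicates are counted once, so noticing the same flag in two brochures does not push a program over the threshold on its own.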

Recognizing red flags is necessary—but not sufficient. Families also need to understand how admissions officers interpret these experiences once they appear on an application.

The next section addresses that interpretive lens directly.

Section 5 — References (APA Style)

Bray, M. (2017). Shadow education: Private supplementary tutoring and its implications for policy makers. UNESCO International Institute for Educational Planning. https://unesdoc.unesco.org/

Federal Trade Commission. (2022). Advertising and marketing compliance guidance. https://www.ftc.gov

National Association for College Admission Counseling. (2023). State of College Admission. https://www.nacacnet.org

OECD. (2021). Education at a Glance. https://www.oecd.org/education/

U.S. Department of Education. (2022). Auxiliary and enrichment education programs: Oversight and transparency considerations. https://www.ed.gov

Section 6 — How Admissions Officers Actually Interpret These Experiences

One of the most persistent myths in the pre-college marketplace is that admissions officers are impressed by the sheer number, cost, or novelty of a student’s experiences. In reality, admissions readers approach applications with a different lens—one shaped by context, credibility, and pattern recognition.

Admissions officers are not tallying programs.

They are evaluating evidence.

This distinction is not anecdotal. Institutional admissions guidance consistently emphasizes that extracurricular experiences are interpreted as contextual indicators of preparation, not as credentials that function independently of coursework, recommendations, and academic trajectory (National Association for College Admission Counseling, 2023).

6.1 What Tends to Get Attention

Across institutions, admissions readers respond most consistently to experiences that demonstrate depth, clarity, and trustworthiness.

Demonstrable Growth Over Time

Experiences that show progression—greater independence, increasing complexity, or deeper responsibility—are more compelling than one-off achievements. Admissions guidance from selective institutions repeatedly stresses growth over participation, noting that sustained engagement provides far more insight into readiness than isolated accomplishments (Harvard College Admissions, 2022).

Readers look for evidence that a student did not simply attend an experience, but developed as a learner or contributor.

Concrete Output or Impact

Artifacts matter. This may include:

- Research contributions that can be described with specificity

- Creative work that reflects technical or conceptual development

- Projects that resulted in something tangible, sustained, or externally engaged

Output does not need to be published or public-facing, but it must be real. Institutional guidance on holistic review emphasizes that concrete work products help admissions readers contextualize claims and assess substance (NACAC, 2023).

Contextual Difficulty

Admissions officers interpret experiences within the context of a student’s school, geography, and access. An opportunity that stretches beyond what a school typically offers carries different weight than one that replicates existing resources.

This approach is consistent with NACAC’s long-standing emphasis on contextual evaluation—assessing achievement relative to opportunity rather than through direct peer comparison.

Credible Adult Evaluation

Recommendations from teachers, mentors, or supervisors who directly observed a student’s work often carry more interpretive weight than the experience itself. Admissions officers rely heavily on adult testimony to verify growth, initiative, and intellectual engagement (Harvard College Admissions, 2022).

In practice, credible evaluation often matters more than program branding.

6.2 What Often Disappears on the Page

Just as important is understanding what does not register meaningfully in admissions review.

Passive Participation

Experiences centered on attendance—lectures, workshops, conferences—without clear responsibility or output tend to blur together, regardless of cost or location. Admissions readers have limited time and rely on substance to differentiate applications.

Generic Prestige Claims

Statements such as “selected for a competitive program” or “participated in a prestigious institute” offer little value without explanation of selection criteria, expectations, or outcomes. Admissions guidance makes clear that unsupported prestige claims do not substitute for evidence (NACAC, 2023).

Overstuffed Résumés

Long activity lists filled with short-term, disconnected experiences often weaken rather than strengthen an application. Volume can obscure focus and raise questions about depth—a concern admissions officers have noted publicly across multiple institutions.

Inflated Titles Without Substance

Titles alone are not persuasive. Admissions readers look past labels to understand what a student actually did, how independently they worked, and what changed as a result. Inflated or ambiguous titles can undermine Credibility ROI by making experiences harder to interpret.

6.3 How Admissions Officers Read Selectivity

Selectivity is not meaningless—but it is contextual.

Admissions officers distinguish between:

- Selectivity that reflects constrained capacity and real evaluation

- Selectivity language used primarily for marketing or enrollment management

Without transparency about how applicants are assessed, selectivity claims carry limited interpretive weight. Conversely, programs with clearly articulated standards—even when unfamiliar to families—often earn trust more quickly in review (NACAC, 2023).

Structure matters more than branding.

6.4 Interpreting Public, Restricted, and Prestige Programs

Eligibility-Restricted Programs

Programs limited by income, geography, race, school type, or other structural factors are evaluated within opportunity context. Admissions officers do not compare these programs across populations, nor do they treat participation as a competitive “edge.”

Importantly:

- Participation is not seen as an advantage over ineligible peers

- Absence is never a disadvantage

- Value lies in preparation and growth, not comparison

This aligns with NACAC guidance on equity-aware holistic review.

Publicly Funded Prestige Programs

Some government-sponsored programs are intentionally designed as elite academic environments. Admissions officers recognize these as rigorous when they provide opportunities that substantially exceed what a student’s home school can offer.

In these cases, selectivity and intensity are legible—and credibility is high.

For-Profit and Hybrid Programs

Admissions officers do not dismiss tuition-funded programs outright. However, they evaluate them skeptically when:

- Selectivity is unclear

- Instructional involvement is opaque

- Outcomes are overstated

Credibility in this category depends entirely on substance, not price or branding—a distinction echoed in both admissions guidance and research on supplemental education markets (Bray, 2017).

6.5 What Raises Quiet Questions

Certain patterns do not automatically harm an application, but they often prompt closer scrutiny.

These include:

- Heavy reliance on pay-to-play credentials

- Publications without editorial standards

- Leadership titles without scope or responsibility

- Repeated short-term programs without progression

- Experiences that appear adult-directed rather than student-driven

These patterns raise interpretive uncertainty—not moral judgment. Admissions readers are asking whether the evidence presented is reliable and representative of the student’s readiness.

6.6 Composite Case Comparisons

To illustrate how interpretation works in practice, consider two composite examples drawn from repeated counseling and admissions-reading patterns.

Figure 6.1. Admissions officers interpret experiences based on depth, clarity, and credibility, not price or branding.

Case A: High Cost, Low Impact

A student attends multiple well-branded summer programs, each lasting one to two weeks. Descriptions emphasize selectivity and location, but provide limited detail about work produced or skills gained. Recommendations mention participation but not growth.

Admissions interpretation:

The experiences are difficult to differentiate and add little clarity about the student’s readiness or direction.

Case B: Modest Cost, High Impact

A student spends a summer developing an independent project aligned with prior coursework, supported by a teacher mentor. The project evolves over time, results in a clear artifact, and is referenced specifically in a recommendation.

Admissions interpretation:

The experience demonstrates initiative, depth, and intellectual ownership—even without external branding.

6.7 The Core Misalignment

Families often evaluate experiences based on how impressive they appear in isolation. Admissions officers evaluate them based on how clearly they demonstrate growth, direction, and trustworthiness within context.

This misalignment explains why families can spend heavily and still feel uncertain—and why some students with modest resources present unusually compelling applications.

Key Takeaway

Admissions officers evaluate opportunity, not just accomplishment. They are looking for credible evidence that a student is ready to engage deeply, sustainably, and authentically in an academic environment. Understanding this interpretive lens is essential to making proportionate, high-ROI decisions.

Section 6 — References (APA Style)

Bray, M. (2017). Shadow education: Private supplementary tutoring and its implications for policy makers. UNESCO International Institute for Educational Planning. https://unesdoc.unesco.org/

Harvard College Admissions. (2022). How we read applications. https://college.harvard.edu/admissions/apply/what-we-look-for

National Association for College Admission Counseling. (2023). State of College Admission. https://www.nacacnet.org

Section 7 — A Decision Framework for Families

By this point, many families can recognize misalignment in the abstract—but still struggle when faced with a concrete decision. A program looks promising. Deadlines are approaching. Testimonials are persuasive. The fear of “missing out” resurfaces.

This section is designed to slow that moment down.

Rather than asking families to become experts in every program category, the goal is to provide a repeatable decision framework—one that can be applied across summer programs, research opportunities, competitions, and beyond.

Decision-science research consistently shows that structured frameworks reduce impulsive choices in high-stakes, emotionally charged environments (Kahneman, 2011). The questions below are not meant to eliminate ambiguity. They are meant to restore proportion.

Figure 7.1. Strong programs align with goals, involve real educators, yield clear growth, and carry a proportionate cost in time and money. When a program falls short on any two of these dimensions, its value is likely overstated.

7.1 Start With Growth, Not Branding

Before considering prestige, selectivity, or outcomes, families should ask a more basic question:

What will my student actually learn or be able to do as a result of this experience?

Concrete prompts:

- What specific skills will be developed?

- How will the work become more complex over time?

- What evidence of growth will remain when the program ends?

Educational research consistently finds that learning outcomes are driven by depth, challenge, and feedback—not by novelty or affiliation (OECD, 2021). If a program cannot articulate how a student will change—academically, technically, or creatively—it is unlikely to deliver meaningful Growth ROI.

7.2 Look for Real Output, Not Just Participation

Experiences with the strongest ROI typically leave behind something tangible. This does not need to be public, published, or polished—but it does need to be real.

Ask:

- Will my student produce a project, paper, performance, or prototype?

- Will they be able to describe their work with specificity?

- Could a mentor or teacher speak concretely about what they did?

Admissions guidance emphasizes that concrete outputs help readers assess substance and credibility, particularly when evaluating experiences outside formal coursework (National Association for College Admission Counseling, 2023).

Without output, even well-intentioned experiences are difficult to interpret.

7.3 Evaluate Credibility Through Structure, Not Language

Marketing language is easy to copy. Structure is harder to fake.

Families should look past words like “selective,” “elite,” or “competitive” and ask:

- How are participants actually evaluated?

- Who provides instruction or mentorship—and how involved are they?

- Is there transparency about expectations and standards?

Research on consumer decision-making shows that people often over-weight persuasive language when structural information is missing (Thaler & Sunstein, 2008). Credibility ROI depends less on how impressive a program sounds and more on whether its design allows admissions readers to trust what it represents.

7.4 Test Narrative Alignment

Not every experience needs to advance a single academic interest, but the overall pattern should make sense.

Ask:

- Does this experience build on prior interests or coursework?

- Will it help clarify direction—or muddy it?

- Does it fit naturally into how my student talks about their curiosity?

Admissions officers consistently emphasize coherence over scattershot accomplishment. Applications are read as narratives, not inventories (Harvard College Admissions, 2022).

Experiences that disrupt a student’s trajectory often weaken applications, even when they are impressive in isolation.

7.5 Consider Opportunity Cost Explicitly

One of the most overlooked aspects of ROI is what a program displaces.

Families should ask:

- What academic, personal, or restorative time will this replace?

- What am I saying no to in order to say yes to this?

- Is this the best use of this particular season?

Decision-science research shows that people systematically under-account for opportunity cost when choices are framed around gain rather than tradeoff (Kahneman, 2011). Naming the tradeoff explicitly leads to more proportionate decisions.

7.6 Assess the Stress Ceiling Honestly

An experience can be rigorous without being corrosive.

Questions to consider:

- Is the workload appropriate for my student’s age and season?

- Is participation driven by genuine interest or external pressure?

- Will my student have energy left for school, relationships, and rest?

Research in adolescent development consistently shows that chronic overextension undermines motivation, learning, and wellbeing over time (Damour, 2019). Wellbeing ROI is not a luxury consideration; it is foundational to readiness.

7.7 A Simple Flow Test

Families often find it helpful to apply a quick diagnostic before enrolling:

- If this program disappeared tomorrow, would my student still be pursuing this interest?

- If admissions outcomes were removed from the equation, would this still feel worthwhile?

- If we had to explain this experience without naming the program, would it still sound compelling?

If the answer to all three is no, it is worth reconsidering.

This type of reframing aligns with evidence-based decision strategies that reduce regret and hindsight bias (Thaler & Sunstein, 2008).

7.8 Reframing the Goal

The goal of pre-college experiences is not to look impressive. It is to become prepared.

Prepared to:

- Engage deeply in advanced coursework

- Take intellectual risks

- Sustain effort over time

- Articulate genuine interests with clarity

When families use this framework consistently, decisions become calmer, more confident, and more proportionate.

Good decisions require not only clear criteria, but trust in the process behind the guidance. The next section explains how this series evaluates pre-college categories throughout the year—what data we use, what patterns we examine, and what we deliberately avoid claiming.

Section 7 — References (APA Style)

Damour, L. (2019). Under pressure: Confronting the epidemic of stress and anxiety in girls. Ballantine Books.

Harvard College Admissions. (2022). How we read applications. https://college.harvard.edu/admissions/apply/what-we-look-for

Kahneman, D. (2011). Thinking, fast and slow. Farrar, Straus and Giroux.

National Association for College Admission Counseling. (2023). State of College Admission. https://www.nacacnet.org

OECD. (2021). Education at a Glance. https://www.oecd.org/education/

Thaler, R. H., & Sunstein, C. R. (2008). Nudge: Improving decisions about health, wealth, and happiness. Yale University Press.

Section 8 — Methodology & Scope

The pre-college marketplace is crowded with claims, testimonials, and implied guarantees. This project takes a different approach.

The Pre-College ROI Project does not rank programs, publish acceptance probabilities, or claim causal links between specific experiences and admissions outcomes. Such claims are neither ethical nor supported by reliable data. National admissions organizations and higher education researchers have consistently cautioned against attributing admissions outcomes to single factors or isolated experiences (National Association for College Admission Counseling, 2023).

Instead, this series evaluates pre-college experiences using a consistent, evidence-informed framework grounded in how admissions decisions are actually made and how students actually develop.

8.1 Our Evidence Base

Each monthly installment draws on four complementary sources of evidence. No single source is treated as definitive; credibility comes from convergence.

1. Public Data and Institutional Reporting

We analyze application trends, enrollment pressure, and admissions practices using publicly available data from:

- National application platforms

- Public university systems

- Higher education policy and research organizations

Sources such as the Common Application, the University of California Office of the President, and the Organisation for Economic Co-operation and Development provide macro-level context on how competition, access, and opportunity have changed over time.

These data inform why the pre-college market expanded—but are not used to infer individual outcomes.

2. Admissions Reading Practices and Institutional Guidance

Interpretive claims in this series are anchored to how institutions describe their own processes. This includes:

- Admissions office publications

- Public statements by admissions leaders

- Professional guidance on holistic review

Guidance from NACAC and from selective institutions consistently emphasizes contextual evaluation, coherence, and credibility over credential accumulation. These sources inform how experiences are read, not how they are marketed.

3. Comparative Case Analysis (Pattern-Based)

Where appropriate, we use anonymized, composite case comparisons drawn from repeated counseling and admissions-reading patterns. These cases are:

- Non-identifying

- Non-predictive

- Used to illustrate interpretation rather than outcome

This approach aligns with qualitative research methods commonly used in education policy analysis, where pattern recognition helps explain decision processes without claiming causality (OECD, 2021).

4. Developmental and Learning Science

Claims related to growth, motivation, stress, and sustainability are grounded in research from:

- Adolescent development

- Educational psychology

- Learning science

Work by scholars such as Lisa Damour (2019) and large-scale syntheses from the OECD (2021) inform how we evaluate Wellbeing ROI and long-term readiness.

8.2 What This Project Can—and Cannot—Claim

Clarity about scope is essential to credibility.

This project can:

- Identify recurring patterns of over- and under-investment

- Explain how admissions officers interpret categories of experiences

- Evaluate alignment between program design and student development

- Offer practical frameworks for decision-making

This project cannot:

- Guarantee admissions outcomes

- Attribute success to a single experience

- Rank programs universally

- Eliminate uncertainty from the admissions process

Higher education research consistently shows that admissions decisions are multi-factorial and institution-specific. Any framework that promises certainty is misrepresenting the process it claims to explain.

8.3 The Analytical Lens (Consistency Across the Series)

All experiences in this series are evaluated using the same four-pillar framework introduced in Section 3:

- Growth ROI

- Narrative ROI

- Credibility ROI

- Wellbeing ROI

This lens remains constant across categories and months. Consistency allows insights to compound rather than reset, enabling readers to compare categories meaningfully over time.

We deliberately avoid changing criteria to fit categories. If a category performs unevenly across pillars, that unevenness is part of the finding.

8.4 Transparency, Ethics, and Limits

This project is offered as a public resource. It does not accept sponsorship from pre-college program providers, nor does it promote paid pathways as inherently superior.

We do not claim comprehensive coverage of all programs or regions. Instead, we focus on:

- Common categories families encounter

- Patterns that recur across contexts

- Decision errors that consistently lead to misallocation

Where evidence is incomplete or mixed, we say so.

Trust in guidance depends not on certainty, but on honesty about what can and cannot be known.

With the methodology established, the final sections of this installment turn outward—explaining how this framework will be applied across the year, and how readers can engage with the series as it unfolds.

Section 8 — References (APA Style)

Common Application. (2023). Application trends and volume reports. https://www.commonapp.org

Damour, L. (2019). Under pressure: Confronting the epidemic of stress and anxiety in girls. Ballantine Books.

National Association for College Admission Counseling. (2023). State of College Admission. https://www.nacacnet.org

OECD. (2021). Education at a Glance. https://www.oecd.org/education/

University of California Office of the President. (2023). Undergraduate admissions data. https://www.ucop.edu

Section 9 — What We’ll Study This Year

This January installment establishes the analytical foundation for The Pre-College ROI Project. Over the coming year, we will apply the same framework—without modification—to the pre-college categories families encounter most often.

Each monthly issue examines one category at a time, using a consistent structure so insights can be compared across domains rather than evaluated in isolation.

The goal is not to crown “best” programs, but to clarify where value is commonly misunderstood, overstated, or overlooked—and for whom.

9.1 The 2026 Pre-College ROI Series Roadmap

The remaining installments in this series are organized as follows:

- Issue 2 — Research Programs: Prestige vs. Pay-to-Play

Evaluating lab-based research, mentorship models, and publication pathways, with attention to role clarity, independence, and credibility.

- Issue 3 — Competitions: Distinction, Depth, and Signal Saturation

Analyzing academic, creative, and STEM competitions through selectivity, progression, and evaluative rigor.

- Issue 4 — Tier-1 Summer Programs: What They Reward (and What They Don’t)

Examining university-run summer programs, including how admissions readers interpret coursework, grades, and institutional context.

- Issue 5 — Internships & “Research Mentorships”

Distinguishing responsibility from shadowing, learning from labeling, and access from advantage.

- Issue 6 — Service & Leadership Experiences

Separating authentic community engagement from performative leadership models and résumé-driven service.

- Issue 7 — Arts & Performance Pathways

Evaluating conservatories, intensives, portfolios, and auditions through growth and sustainability lenses.

- Issue 8 — STEM Certifications, MOOCs, and Micro-Credentials

Assessing when credentials support readiness, and when they function primarily as decorative add-ons.

- Issue 9 — Global & Language Programs

Interpreting immersion, exchange, and international programs in the context of access, duration, and real proficiency development.

- Issue 10 — Entrepreneurship & Startup Programs

Analyzing pitch competitions, incubators, and “founder” experiences for substance versus simulation.

- Issue 11 — Independent Projects & Passion-Driven Work

Examining student-initiated work as a high-variance but often high-ROI category when properly scoped and supported.

- Issue 12 — The Real ROI Report: Findings Across the Year

A synthesis of patterns, misalignments, and high-impact decision rules observed across categories.

9.2 What Each Installment Will Include

Every issue in the series follows the same scaffold:

- Landscape Snapshot

What families are told, what they believe, and what the market emphasizes.

- Data & Research Context

Relevant admissions data, institutional guidance, or educational research.

- Risk Analysis

Common misrepresentations, predatory structures, or inflated claims.

- When the Category Works—and for Whom

Contextual analysis by student profile, access, and developmental stage.

- Case Comparisons

Composite examples illustrating interpretation, not prediction.

- Family Decision Tools

Checklists, diagnostic questions, and tradeoff frameworks.

- Lower-Cost or Alternative Pathways

Options that deliver similar growth or credibility with fewer resources.

This consistency is intentional. It allows families, counselors, and advisors to build judgment over time rather than recalibrating expectations with each new opportunity.

9.3 How Readers Can Engage With the Series

This project is designed to be used flexibly.

Some families may read only the sections relevant to an upcoming decision. Others may follow the series sequentially. Counselors and advisors may return to specific issues as reference tools during planning conversations.

We also invite readers to submit:

- Categories they find confusing

- Claims they would like unpacked

- Tradeoffs they are struggling to evaluate

These submissions help shape future installments and ensure the analysis remains grounded in real decision-making contexts.

9.4 A Note on What This Series Is Not

This series does not promise shortcuts. It does not offer rankings, endorsements, or admissions guarantees. It does not attempt to collapse a complex, human process into formulas or probability tables.

Instead, it offers something quieter—and more durable: shared criteria.

Across a fragmented and emotionally charged market, families deserve tools that help them distinguish:

- Development from decoration

- Credibility from branding

- Preparation from panic

The final section returns to this idea—and to the broader purpose of the project as a public resource.

Section 10 — Conclusion & Invitation

The modern pre-college marketplace rewards speed, branding, and accumulation. Admissions does not.

Students are not admitted because they did everything. They are admitted because their applications demonstrate readiness: intellectual growth, direction over time, credible evidence of engagement, and the capacity to thrive in a demanding academic environment.

The gap between those two systems—the market and the review process—is where families most often misallocate time, money, and energy.

This project exists to narrow that gap.

Across the coming year, The Pre-College ROI Project will examine the most common pre-college pathways using a consistent lens. Not to judge families’ choices after the fact, but to help future decisions feel calmer, more proportionate, and better informed. Some categories will prove more valuable than families expect. Others will reveal diminishing returns. Many will depend heavily on context.

What matters most is not choosing the “right” program, but understanding why an experience adds value—or doesn’t—given a particular student’s interests, access, and stage of development.

Clarity changes how decisions feel. When families have shared criteria, they are less vulnerable to urgency, anecdote, and prestige bias. They are more likely to invest in experiences that build real capability, protect curiosity, and support long-term growth.

This journal is offered as a public resource. It is not a substitute for judgment, nor is it a guide to optimization. It is an invitation to think more carefully about tradeoffs—and to resist the idea that more is always better.

If you find this framework useful, you’re welcome to follow the series as it unfolds. Each installment builds on the last, adding depth and specificity without changing the underlying standards.

And if at any point you want a second set of eyes on a plan, a claim unpacked, or a tradeoff talked through, our team is available as a resource. Not because there is a single correct path—but because thoughtful preparation is easier when families are not navigating alone.

Clarity, not urgency, is the real advantage.